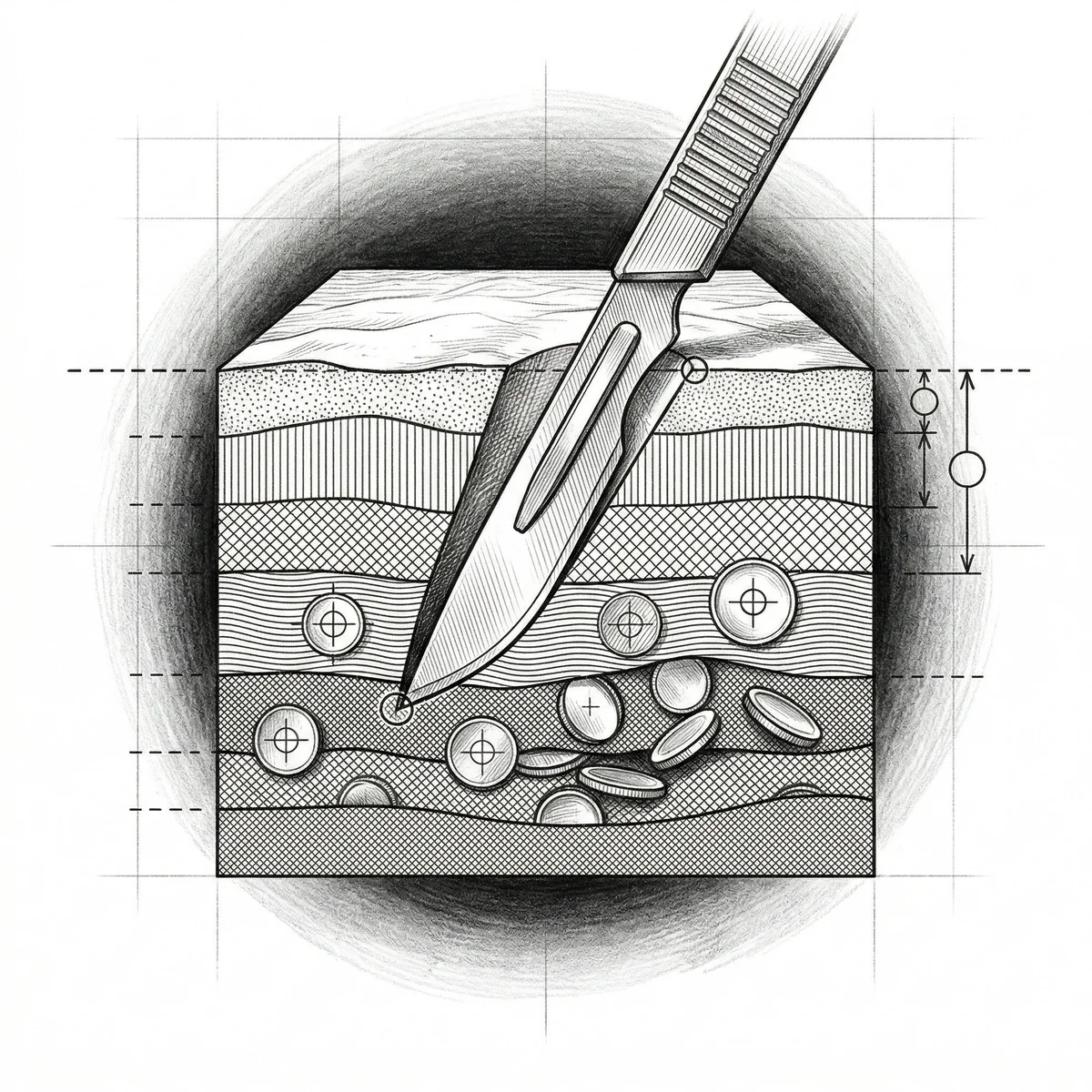

A surgeon in Lincoln, Nebraska spent years sending bushel baskets full of normal gallbladders down to the pathology lab at the leading hospital in town. Not diseased gallbladders. Normal ones. Healthy organs removed from patients who didn’t need the surgery, by a doctor who was paid per procedure. The hospital, operating with the kind of permissive quality control for which community hospitals have always been famous, let it continue for years before removing him from the medical staff.

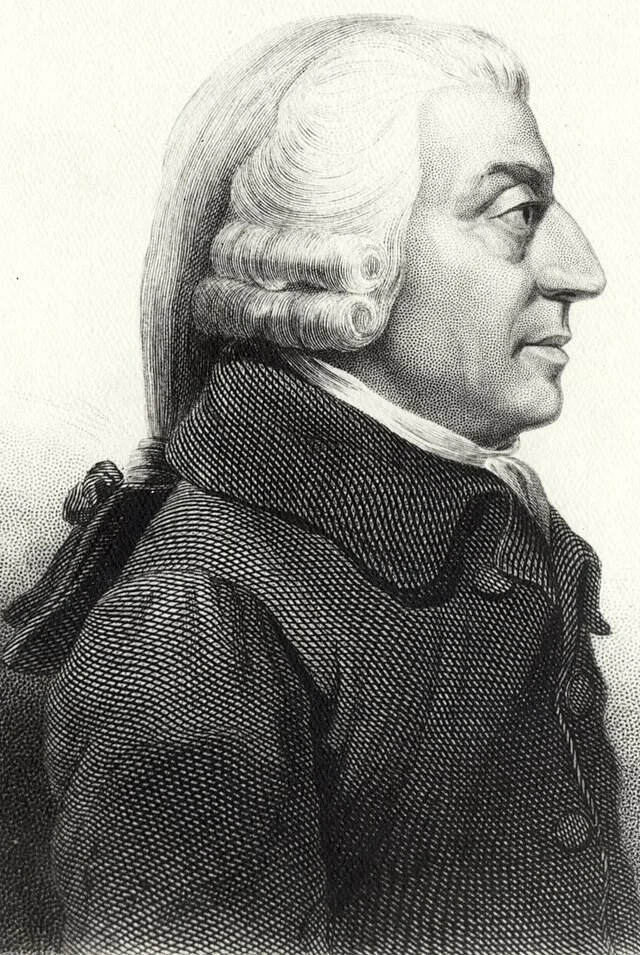

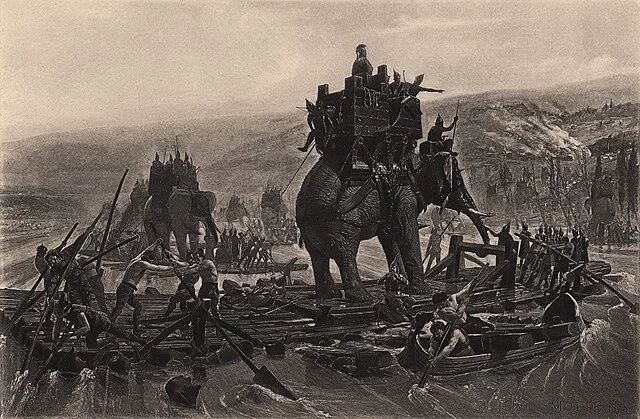

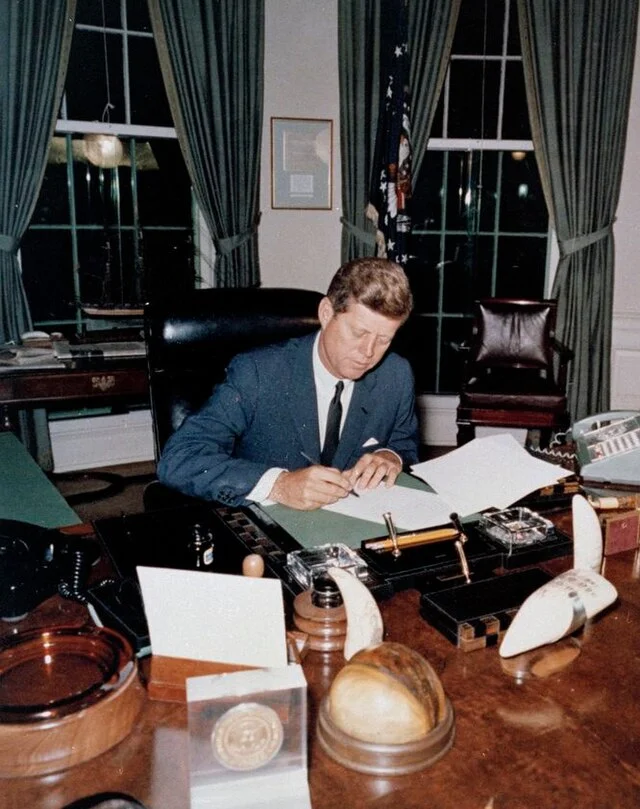

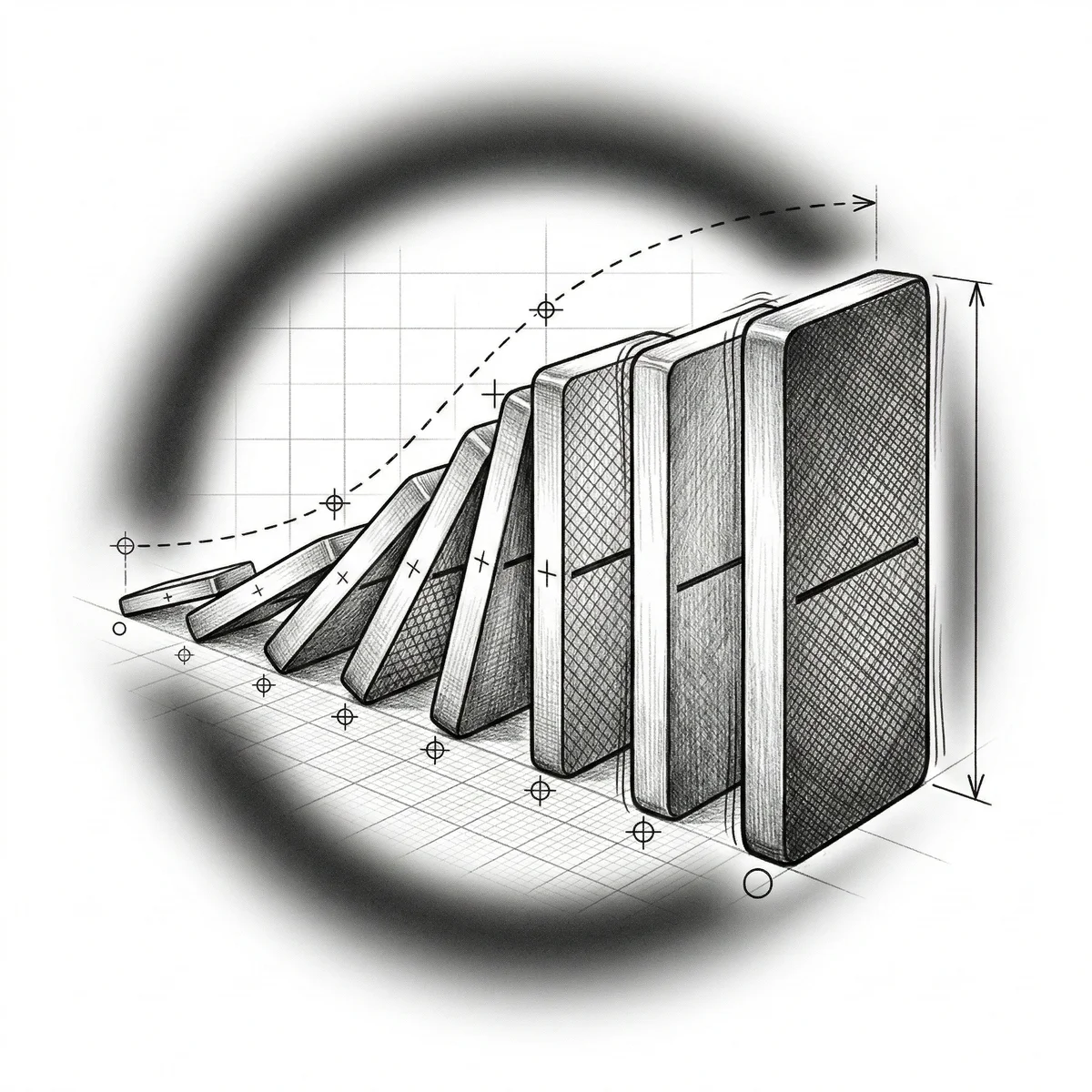

Charlie Munger grew up nearby, in Omaha. One of the doctors who helped remove the surgeon was a family friend. Munger asked him how it had happened, and from the answer he drew a conclusion that became the first principle of his analytical framework: incentive-caused bias is the most powerful force in professional life, and the person it deceives most completely is the person collecting the pay. The surgeon didn’t think he was removing healthy organs. He thought he was being thorough. The compensation formula had rewritten his clinical judgment so seamlessly that he couldn’t detect the distortion from the inside. Intelligence offered no defense. Neither did good intentions. Neither, for that matter, did a medical degree. This is the dark magic of misaligned pay: it doesn’t make you dishonest. It makes you wrong, with total confidence.

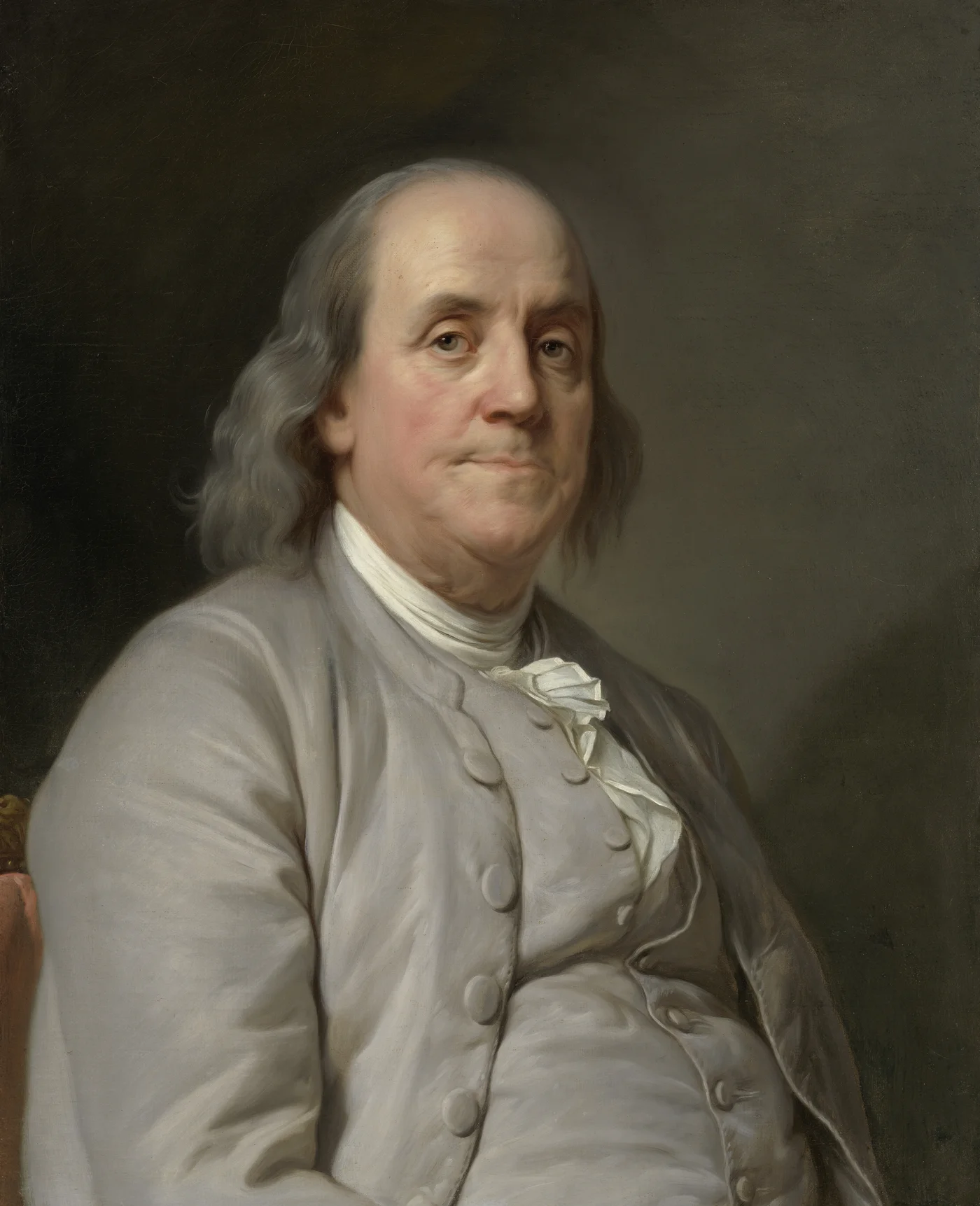

Ben Franklin put the operating principle more bluntly more than two centuries earlier: “If you would persuade, appeal to interest, not to reason.” Munger quoted Franklin constantly because Franklin had identified the load-bearing insight in ten words. Reason is what people use to explain their decisions. Interest is what actually drives them. The gap between those two forces is where most organizational failures live, where most negotiation mistakes are born, and where most strategic blind spots fester until they metastasize into something expensive. Evolutionary biologists have a term for organisms that signal one thing while doing another: mimicry. The corporate version is called a mission statement.

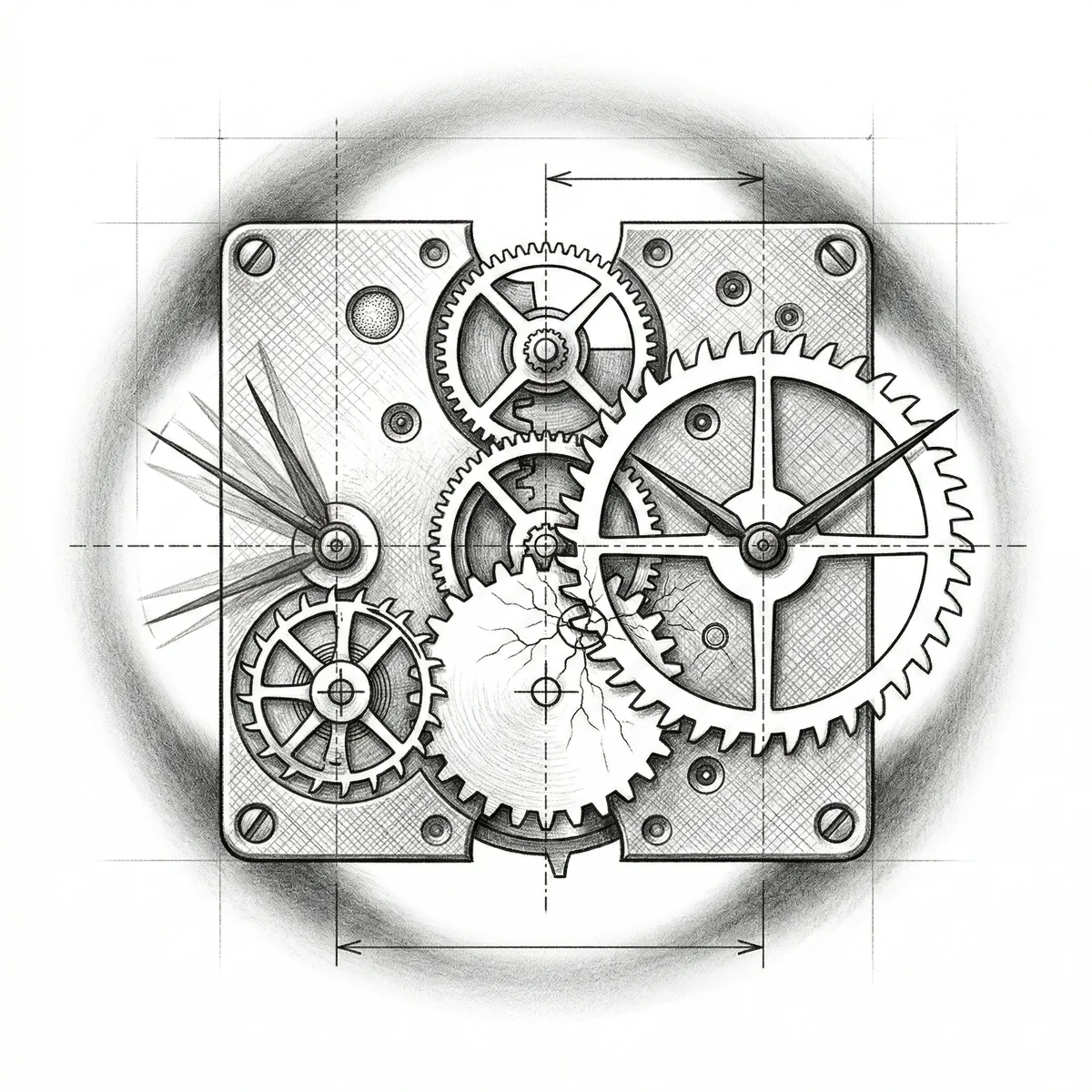

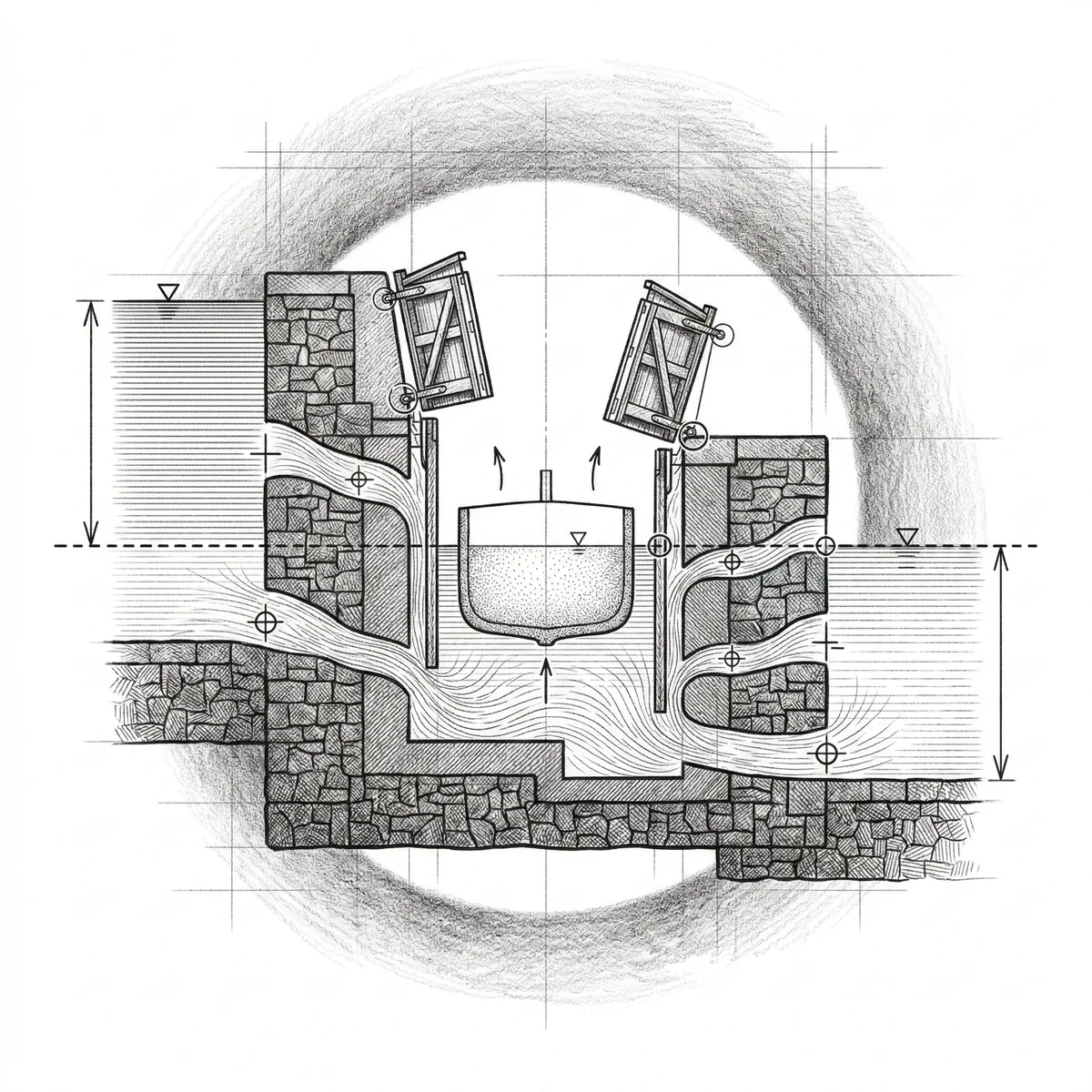

This is the Incentive Audit. It runs on a single diagnostic question: What is every party in this situation actually paid to do? Not what they say they value. Not what they intend. What the architecture of payments and penalties makes almost inevitable. The answer to that question will explain conduct that talent, character, and intelligence cannot.

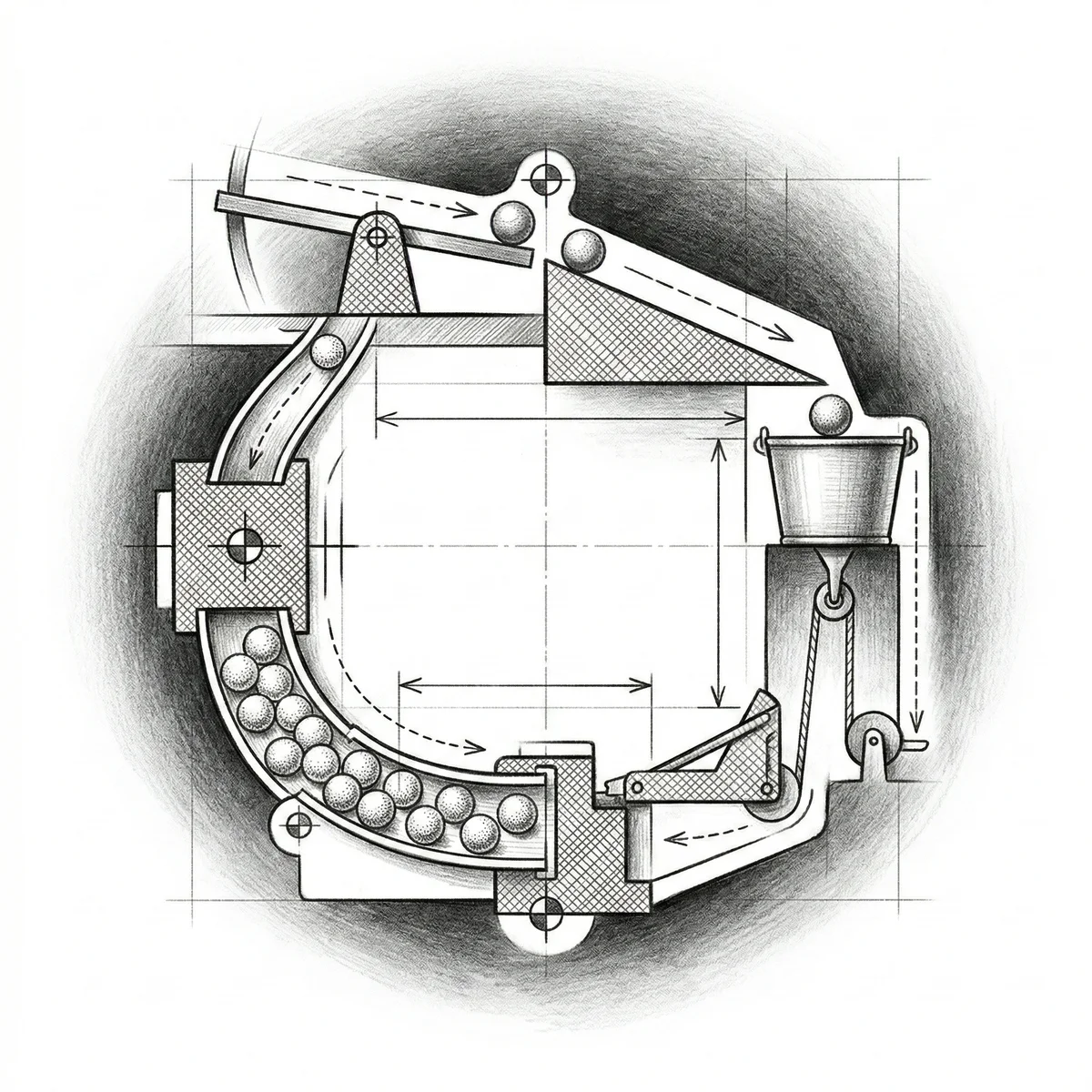

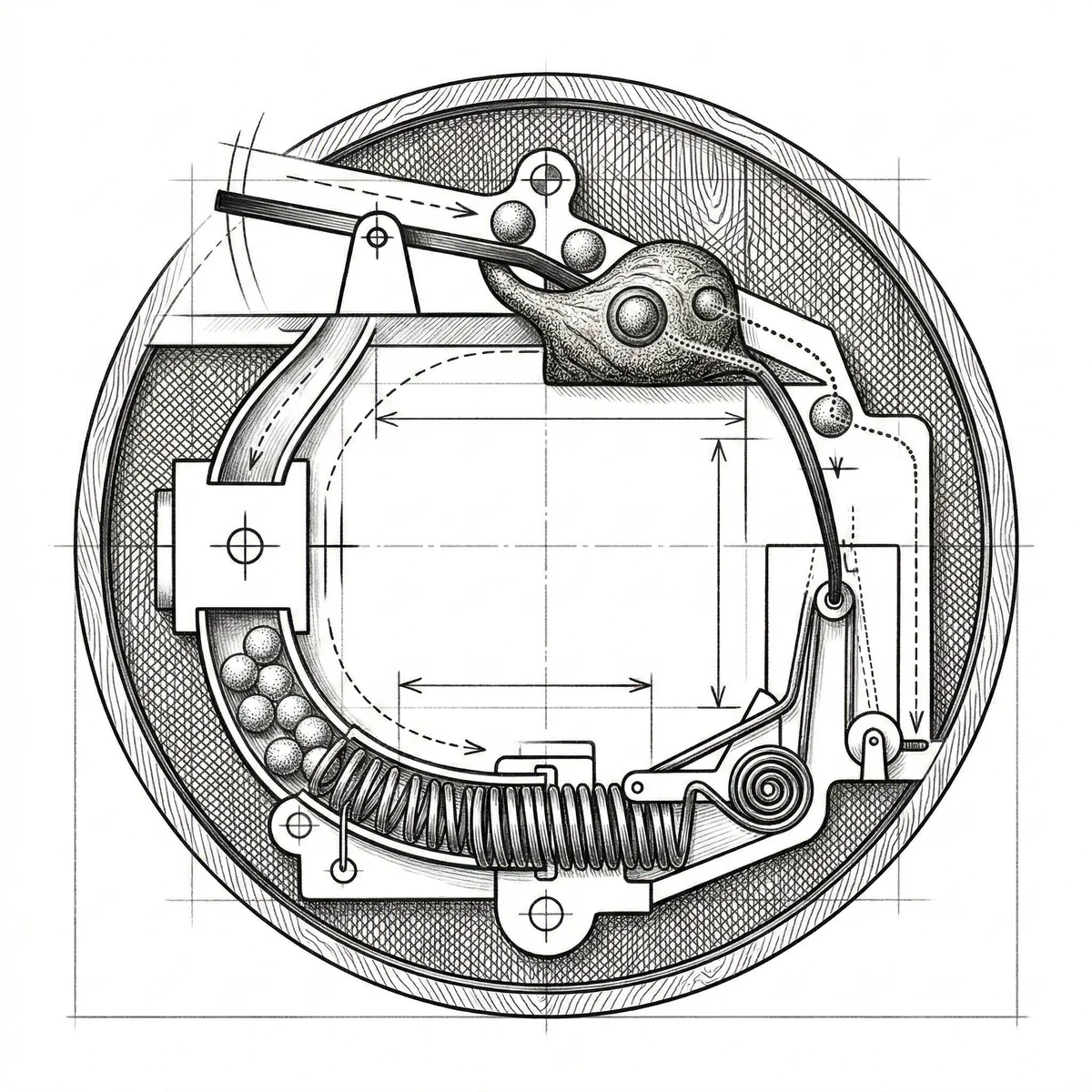

And like the Inversion Stack, it comes in four modes.